This assignment is due Monday April 18th at 11:59pm.

For this assignment, you are expected to identify and eliminate dead code. Dead code is any instruction that does not affect the output of the program, or more precisely, of the function that contains it. For dead code elimination (DCE), you will need to use def-use chains to find instructions that do not affect the outputs. Remember that the output of a function is not limited to its return value: stores to memory, calls with side effects, and printed output also count.
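To make the def-use idea concrete, here is a minimal sketch on a hypothetical toy IR. It is not URCC or LLVM code, and the `Inst` class and the `SIDE_EFFECT_OPS` opcode names are illustrative assumptions. It builds def-use chains, then repeatedly deletes side-effect-free instructions whose results have no users. Each deletion can leave an operand's definition unused, so that definition goes back on the worklist.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class Inst:
    dest: Optional[str]               # name this instruction defines, or None
    op: str
    args: List[str] = field(default_factory=list)

# Hypothetical opcodes whose effects are visible outside the function
# (memory writes, calls, output, return); these are never treated as dead.
SIDE_EFFECT_OPS = {"store", "call", "print", "ret"}

def eliminate_dead_code(insts):
    """Delete instructions with no side effects whose results are never used.
    Assumes every name is defined at most once (SSA-like straight-line code)."""
    def_of = {inst.dest: k for k, inst in enumerate(insts) if inst.dest is not None}
    # Def-use chains: index of a defining instruction -> indices of its users.
    users = {k: set() for k in range(len(insts))}
    for k, inst in enumerate(insts):
        for a in inst.args:
            if a in def_of:
                users[def_of[a]].add(k)

    dead = set()
    worklist = list(range(len(insts)))
    while worklist:
        k = worklist.pop()
        if k in dead or insts[k].op in SIDE_EFFECT_OPS or users[k]:
            continue
        dead.add(k)
        # Deleting k removes its uses, which may leave its operands' defs unused.
        for a in insts[k].args:
            if a in def_of:
                users[def_of[a]].discard(k)
                worklist.append(def_of[a])
    return [inst for k, inst in enumerate(insts) if k not in dead]

prog = [
    Inst("a", "const", ["1"]),
    Inst("b", "add", ["a", "a"]),   # only used by c
    Inst("c", "mul", ["b", "a"]),   # never used: dead, which then makes b dead
    Inst("d", "sub", ["a", "a"]),
    Inst(None, "ret", ["d"]),       # the return keeps d (and a) alive
]
result = eliminate_dead_code(prog)
print([inst.dest or inst.op for inst in result])  # ['a', 'd', 'ret']
```

One design caveat: this use-count worklist cannot remove a dead cycle, such as a loop-carried phi whose only user is its own increment. A mark-and-sweep pass that starts from the side-effecting instructions and marks everything they transitively use does handle that case.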

IMPORTANT NOTE:

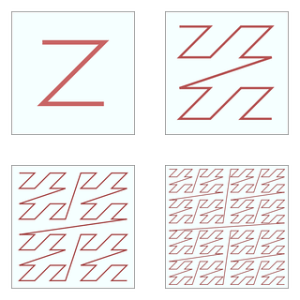

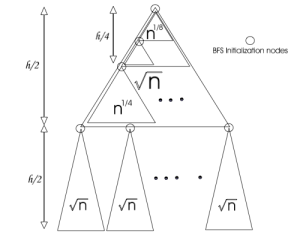

Students who implemented assignment 4 in LLVM and relied on the SSA property to find def-use chains with a linear scan of the LLVM IR are expected to meet an additional requirement for this assignment, whether they choose URCC or LLVM for it: Common Subexpression Elimination (CSE). An example of this optimization is illustrated below.

Adapted from [1]

For CSE, you must first do the AVAIL analysis and then reason about expressions that are redundant.
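As an illustration of the two steps, here is a minimal sketch of AVAIL (available expressions) followed by a redundancy check. It runs on a hypothetical non-SSA three-address IR where each instruction is `(dest, op, lhs, rhs)`. AVAIL is a forward "must" analysis: IN[B] is the intersection of OUT[P] over all predecessors P, and OUT[B] = GEN[B] ∪ (IN[B] − KILL[B]). Assigning to a variable kills every expression that uses it. The block names and the CFG are assumptions chosen for the example.

```python
def local_sets(insts, universe):
    """GEN: expressions computed in the block and still valid at its end.
    KILL: expressions whose operands are assigned somewhere in the block."""
    gen, assigned = set(), set()
    for dest, op, lhs, rhs in insts:
        gen.add((op, lhs, rhs))
        # The assignment to dest invalidates every expression that reads dest.
        gen = {e for e in gen if dest not in (e[1], e[2])}
        assigned.add(dest)
    kill = {e for e in universe if e[1] in assigned or e[2] in assigned}
    return gen, kill

def avail(blocks, preds, entry):
    """Iterate the AVAIL equations to a fixed point; returns (IN, OUT)."""
    universe = {(op, l, r) for insts in blocks.values() for _, op, l, r in insts}
    gen, kill = {}, {}
    for b, insts in blocks.items():
        gen[b], kill[b] = local_sets(insts, universe)
    inn = {b: set() for b in blocks}              # IN[entry] stays empty
    out = {b: set(universe) for b in blocks}      # optimistic start for a must-analysis
    out[entry] = set(gen[entry])
    changed = True
    while changed:
        changed = False
        for b in blocks:
            if b == entry:
                continue
            ps = preds[b]
            new_in = set.intersection(*(out[p] for p in ps)) if ps else set()
            new_out = gen[b] | (new_in - kill[b])
            if new_in != inn[b] or new_out != out[b]:
                inn[b], out[b] = new_in, new_out
                changed = True
    return inn, out

def find_redundant(blocks, inn):
    """List (block, index, expr) for computations already available on every path."""
    redundant = []
    for b, insts in blocks.items():
        avail_now = set(inn[b])
        for k, (dest, op, lhs, rhs) in enumerate(insts):
            e = (op, lhs, rhs)
            if e in avail_now:
                redundant.append((b, k, e))
            avail_now.add(e)
            avail_now = {x for x in avail_now if dest not in (x[1], x[2])}
    return redundant

# Diamond CFG: B0 branches to B1 and B2, which both flow into B3.
blocks = {
    "B0": [("a", "+", "x", "y")],
    "B1": [("d", "+", "x", "y")],   # x+y available from B0: redundant
    "B2": [("x", "+", "x", "1")],   # redefines x, killing x+y on this path
    "B3": [("c", "+", "x", "y")],   # not redundant: killed along the B2 path
}
preds = {"B0": [], "B1": ["B0"], "B2": ["B0"], "B3": ["B1", "B2"]}
inn, out = avail(blocks, preds, "B0")
print(find_redundant(blocks, inn))  # [('B1', 0, ('+', 'x', 'y'))]
```

After a redundant computation is found, the rewrite step saves the earlier result in a temporary and replaces the redundant instruction with a use of that temporary. That part is omitted here.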

Note 1: Since LLVM IR has no copy instruction, you may need to use the “replaceAllUsesWith” method to replace all uses of c with t. Also, because LLVM uses the SSA representation, you might need to insert new phi nodes.
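The following toy model sketches what replacing all uses looks like when there is no copy instruction. It also shows why a phi may be needed. In LLVM itself the relevant calls are `Value::replaceAllUsesWith` and `Instruction::eraseFromParent`, and a real `PHINode` pairs each incoming value with its predecessor block. The `Value` class, the helper names, and the simplified block-less `phi` below are hypothetical stand-ins for those APIs. In the example, `x+y` is computed in both arms of a branch as two different SSA values. At the join it is available on every path, but under two names, so a phi merges them before the redundant value's uses are redirected.

```python
class Value:
    """A toy SSA value that records its operands and its users."""
    def __init__(self, name, op=None, operands=()):
        self.name = name
        self.op = op
        self.operands = list(operands)
        self.users = []
        for v in self.operands:
            v.users.append(self)

def replace_all_uses_with(old, new):
    """Point every user of old at new instead (the idea behind RAUW)."""
    for user in old.users:
        user.operands = [new if v is old else v for v in user.operands]
        new.users.append(user)
    old.users = []

def erase(value):
    """Drop a value with no remaining users from its operands' user lists."""
    assert not value.users, "erase only values whose uses were replaced"
    for v in value.operands:
        v.users.remove(value)

x, y = Value("x"), Value("y")
t1 = Value("t1", "add", [x, y])    # computed in B1
t2 = Value("t2", "add", [x, y])    # computed in B2
c = Value("c", "add", [x, y])      # recomputed in B3, the join of B1 and B2
out = Value("out", "print", [c])

# Merge the two available copies, then redirect c's uses and delete c.
p = Value("p", "phi", [t1, t2])
replace_all_uses_with(c, p)
erase(c)
print(out.operands[0].name)  # p
```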

Note 2: You do not need to implement partial redundancy elimination (PRE) for this assignment.

Testing

You are expected to test your optimization pass by counting the number of executed instructions. To do so, you are provided with a test script. Download this script into your URCC repository.
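The assignment does not prescribe how to count executed instructions. One common approach, assumed here, is to insert a counter update at the start of every basic block that adds the block's size, and then print the total once at exit. The sketch below models that approach on a hypothetical toy IR. The `count_add` pseudo-instruction and the execution trace are illustrative only. The counter uses each block's size before instrumentation, so the counting code itself is not included in the total.

```python
def instrument(blocks):
    """Prepend a counter update to every basic block.

    blocks: dict mapping block name -> list of instructions (toy IR).
    The increment is the block's size before instrumentation."""
    return {name: [("count_add", len(insts))] + list(insts)
            for name, insts in blocks.items()}

def run(instrumented, trace):
    """Simulate executing the blocks named in trace, in order, and return
    the line the instrumented program would print at exit."""
    count = 0
    for name in trace:
        for inst in instrumented[name]:
            if isinstance(inst, tuple) and inst[0] == "count_add":
                count += inst[1]
    return f"INST COUNT: {count}"

blocks = {
    "entry": ["i0", "i1"],         # 2 instructions
    "loop": ["i2", "i3", "i4"],    # 3 instructions
    "exit": ["i5"],                # 1 instruction
}
trace = ["entry", "loop", "loop", "loop", "exit"]   # assumed execution path
print(run(instrument(blocks), trace))  # INST COUNT: 12
```

Counting per block rather than per instruction keeps the runtime overhead to one update per block while still giving an exact count, because every instruction in a basic block executes whenever the block is entered.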

If you are using URCC for this assignment, you can easily run “./test.rb all” to run all the tests. You can also run an individual test by running “./test.rb #{program_name}”.

If you are using LLVM for this assignment, you will need to reimplement the Test.test method in the provided script, following its current implementation. This method is where a program is compiled and your optimization and instrumentation are applied. You are allowed to use your peers’ test scripts.

The “./test.rb all” command produces a “urcc_test_results.txt” file that contains the program outputs along with your dynamic instruction counts. Remember to print the instruction count as a single line, “INST COUNT: x”, where x is the total number of executed instructions. Include this file in your report, along with your test script (if modified) and instructions on how to run the tests. Do not forget to give a thorough explanation of your implementation.
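When comparing an unoptimized run with an optimized run, it can help to check two things: the "INST COUNT: x" line went down, and the rest of the output is unchanged. The sketch below assumes each program's output is available as a string. It does not rely on any particular layout of “urcc_test_results.txt”, and the helper names are hypothetical.

```python
import re

# Matches the required single line "INST COUNT: x".
COUNT_RE = re.compile(r"^INST COUNT: (\d+)[ \t]*$", re.MULTILINE)

def inst_count(output: str) -> int:
    """Return the dynamic instruction count printed by one program run."""
    matches = COUNT_RE.findall(output)
    if len(matches) != 1:
        raise ValueError(f"expected one INST COUNT line, found {len(matches)}")
    return int(matches[0])

def program_output(output: str) -> str:
    """The program's own output with the count line removed, for comparison."""
    return COUNT_RE.sub("", output)

def reduction(baseline_output: str, optimized_output: str) -> float:
    """Percentage of executed instructions removed by the optimization."""
    before = inst_count(baseline_output)
    after = inst_count(optimized_output)
    return 100.0 * (before - after) / before

base = "42\nINST COUNT: 200\n"
opt = "42\nINST COUNT: 150\n"
assert program_output(base) == program_output(opt)  # optimization preserved output
print(reduction(base, opt))  # 25.0
```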

Note: URCC appears to fail to generate a correct executable for two of the test cases, sort.c and tax.c. You can exclude these test cases by removing them from the test directory.