Month: August 2018

CSC 253/453 Fall 2018

CSC 253/453 Fall 2018

Dynamic Language & Software Development

Prerequisites: CSC 252 and CSC 254 are required for CSC 453 and recommended for CSC 253. Familiarity with a dynamic programming language such as Python is required for CSC 253.

Crosslisted: TCS 453 (same requirement as CSC 253)

This course studies dynamically-typed programming languages and modular software development. Topics include principles and practice of modular design, functional and object-oriented programming techniques, software engineering concepts, software correctness and reliability, programming tools, and design examples. Ruby is used as the main instruction language. The lessons complement those in traditional compilers and programming languages courses, which focus mainly on statically-typed languages and individual algorithms rather than system design. A significant portion of the assignment is a group project.

Teaching Staff and office hours: Prof. Chen Ding, Fridays 11am to 12pm in Wegmans Hall 3407, x51373. Yu Feng, 5pm to 6pm, Mondays and Wednesdays, Wegmans Hall 3407. Patrick Ferner, 2:30pm to 3:30pm, Tuesdays, Wegmans 2215 (updated 9/11).

Policies for grading, attendance, and academic honesty (updated 8/27)

Grading:

- mid-term and final exams, 15% each

- two written homeworks, 5% each

- assignments and projects, 60%

Assignments are typically handed out on Wednesday and due the following Tuesday midnight.

Preparation (before first class):

“No Silver Bullet — Essence and Accidents of Software Engineering” is a classic paper on software engineering written by Turing Award winner Fred Brooks in 1986. Read the paper (available here if accessed inside the UR network) pages 3 to 5 on the “essential difficulties” of software development and skim the rest of the paper.

“A former member of the SD10 Panel on Computing in Support of Battle Management explains why he believes the ‘star wars’ effort will not achieve its stated goals.” Read the paper (available here if accessed inside the UR network) pages 2 to 4 the section titled “Why software is unreliable.” Which of the “essential difficulties” was Parnas discussing?

More background of this debate, detailed rationales and an illuminating discussion of the ethical issues can be found in another article of Parnas: “SDI: A Violation of Professional Responsibility”. The article does not seem to have a free version online, but you can read it by borrowing the book “Software Fundamentals” (included as Chapter 27) from the textbook reserve for CSC 253/453 at the Carlson Library. The lease is two hours.

Further material will be distributed through the Blackboard web site for students who have registered. Contact the instructor if you have problem accessing the site.

Textbooks (online access at learn.rochester.edu > CSC 253 > Reserves > Materials on Reserve in the Library):

| Software fundamentals : collected papers by David L. Parnas Author: Parnas, David Lorge. Imprint: Boston : Addison-Wesley, 2001. On Reserve at: Carlson Library Reserve Desk 2nd Floor Call Number: QA76.754 .P365 2001 |

Object-oriented Software Engineering

Author: Schach, Stephen R.

Imprint: New York : McGraw-Hill, c2008.

Available at school book store. On Reserve at: Carlson Library Reserve Desk 2nd Floor

| Design patterns in Ruby [electronic resource] Author: Olsen, Russ. Imprint: Upper Saddle River, NJ : Addison-Wesley, c2008. Available through Carlson Library at: Internet |

Programming Languages: Application and Interpretation (http://cs.brown.edu/~sk/Publications/Books/ProgLangs/2007-04-26/)

Copyright © 2003-07, Shriram Krishnamurthi

(Also see Prof. Findler’s course EECS 321 at https://www.eecs.northwestern.edu/~robby/courses/)

Learn You a Haskell for Great Good!

A Beginner’s Guide

by Miran Lipovača (http://learnyouahaskell.com)

No Starch Press, April 2011.

Programming Language Pragmatics, 4th Edition

Author: Scott, Michael L.

On Reserve at: Carlson Library Reserve Desk 2nd Floor

Call Number: CRL PersCpy

Other Materials

| Ruby under a microscope [electronic resource] : an illustrated guide to Ruby internals Author: Shaughnessy, Pat. Imprint: San Francisco : No Starch Press, [2014] Available at school book store. Also on Reserve at: Internet |

| Fundamentals of software engineering Author: Ghezzi, Carlo. Imprint: Upper Saddle River, N.J. : Prentice Hall, c2003. On Reserve at: Carlson Library Reserve Desk 2nd Floor Call Number: QA76.758 .G47 2003 |

Topics:

See schedule

Policies for CSC 2/453

The workload will be heavy. Be sure to read instructions for each assignment and exam carefully, start the assignment early, know where/when to seek help, and work with peers.

Grades will be released periodically to Blackboard, the University’s on-line course management system.

Attendance and Class Participation

Class attendance is mandatory. Please arrive on time. I expect to start at 3:25 sharp, and late arrivals disturb the folks who are already there. You are encourage to ask or answer questions in class. I may call on you just to know what you think. As a general rule, if there’s something you don’t understand, make me stop and explain it. There are probably a dozen people sitting around you who didn’t understand it either, but don’t have the nerve to say so. Likewise, if I’m belaboring something that everyone understands, prod me to move on.

I will assign reading before and after lectures. Reading is mandatory It includes all lecture slides released to Blackboard, and textbook chapters/sections listed on the first slide of each lecture. Keep in mind that the exams include topics covered in class and in the required reading.

Late Submission Policy

A student may have a total of two extra days in all assignments. They can be used as either a one-day extension for two assignments, or a two-day extension for one assignment. A student must inform the TA about the extension before the due time. No other late submission is permitted.

Academic Honesty

Student conduct in CSC 2/453 is governed by the College Academic Honesty Policy, the Undergraduate Laboratory Policies of the Computer Science Department, and the University’s Acceptable Use Policy for Information Technology. I helped to draft some of the descriptions. I believe in them strongly, and will enforce them aggressively.

The following are details specific to CSC 2/453.

Exams in CSC 2/453 must be strictly individual work.

Collaboration on programming assignments among team members is of course expected. Collaboration on assignments acrossteams is encouraged at the level of ideas. Feel free to ask each other questions, brainstorm on algorithms, or work together at a whiteboard. You may not claim work as your own, however, unless you transform the ideas into substance by yourself. Among other things, this means that you must leave any brainstorming sessions with no written or electronic notes—only what you carry in your head.

If you use the work of others (e.g., you download a function from the web at the last minute so that you can get the rest of your project working), then (1) either you must have the author’s explicit permission or the material must be publicly available, and (2) you must label what you copied, clearly and prominently, when you hand it in. You will of course get points only for the parts of your assignment that you wrote yourself.

To minimize the temptation to steal code, all students are expected to protect any directories or on-line repositories in which they do their work.

For purposes of this class, academic dishonesty is defined as

- Any attempt to pass off work on an exam or quiz that didn’t come straight out of your own head.

- Any collaboration on assignments beyond the sharing of ideas, unless the collaborating parties clearly and prominently explain exactly who did what, at turn-in time.

- Any activity that has the effect of significantly impairing the ability of another student to learn. Examples here might include destroying the work of others, interfering with their access to resources, or deliberately providing them with misleading information.

Note that grades in CSC 2/453 are assigned on the basis of individual merit rather than relative standing, so there is no benefit—even a dishonest one—to be gained by sabotaging the work of others.

I work under the assumption that students are honest. I will not go looking for exceptions. If I discover one, however, I will come down on it very hard. Over the past few years, I have submitted about a dozen cases to the College Board on Academic Honesty. All resulted in major penalties for the students involved.

Character Co-Occurrence in Shakespeare’s Sonnets and the Bible

This past summer, I’ve had the pleasure of working with Professor Chen Ding, Lucinda Liu, and Professor Daniel Gildea on an all-timescale co-occurrence analysis algorithm for optimizing microprocessor storage usage. This analysis can efficiently provide co-occurrence ratios for multiple timescales, which are time allowed for or taken by a process or sequence of events. The timescale is used to determine the likelihood of two events occurring together within that time window, giving a co-occurrence ratio. The developed algorithm is unique in its ability to calculate co-occurrence ratios for multiple timescales more efficiently than a brute-force method.

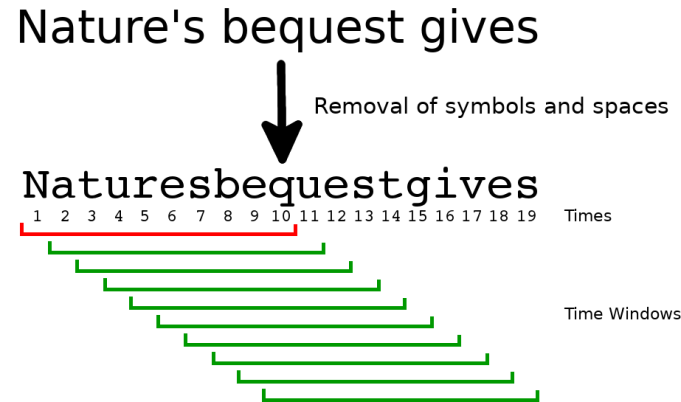

An initial pass of a trace of objects stores the distance between different elements of the trace. These distances can be used to see where gaps between trace objects are to wide to fall in the same time window, giving us a count of absence windows. The beauty of this algorithm is that the expensive operation, the initial pass of the trace, only needs to be done once to calculate timescale analysis of all pairs in a trace. By counting absence windows, joint and single frequencies can be determined.

We define the co-occurrence ratio to be the joint frequency of the pair divided by the single frequency of a given element. The given element is known to be present in a time window, meaning that the co-occurrence ratio represents the probability of finding the other element in the same time window. This makes the co-occurrence ratio a conditional probability.

This analysis was initially intended for use in optimizing processor cache utilization, by placing frequently co-occurring memory objects together in memory. The precise explanation can be found this paper. However, this concept can be extrapolated to any trace, whether it be a trace of words, or music notes, or pixels in a photo.

We applied our algorithm to Shakespeare’s Sonnets and the King James Bible, treating each character as a memory access taking one unit of time, skipping symbols and spaces. The letters, ignoring capitalization, are treated as memory accesses. We used timescales of 2, 5, and 10 to look at adjacent pairs, what one hand can type, and what two hands can type. Below are links to the data files and analysis of Shakespeare’s Sonnets and the King James Bible for multiple timescales using our algorithm:

| Shakespeare’s Sonnets | Timescale 2 | Timescale 5 | Timescale 10 |

| King James Bible | Timescale 2 | Timescale 5 | Timescale 10 |

The data can be interpreted as the conditional probability of seeing the second character given the first character is present in a given time window. Each individual character appears the same number of windows as the timescale size. So, if a second character is always adjacent to the first character on the same side the maximum co-occurrence ratio should be (timescale – 1)⁄timescale. The reason for this is that there will be one window that includes the first character but not the second.

For example, the character ‘q’ should always precede the character ‘u’. So, given a time window with ‘q’ the probability seeing ‘u’ in the same window should be at least (timescale – 1)⁄timescale. This means that all pairs with a co-occurrence ratio above that value have the second character on both sides of the first character like in the case of all word containing the substring “eve”. This is why for a timescale of 2 in Shakespeare’s Sonnets the pair (q, u) can be beaten by pairs like (x, e), but with larger timescales u’s from other words boost the (q, u) co-occurrence to put it in the lead.

An example from Shakespeare’s Fourth Sonnet. Note how with the pair (q, u) the first window contains ‘q’ but not ‘u’ while the other nine windows have both characters. Also, the ‘q’ present in this trace appears in the same number of windows as the timescale size.

It is important to note that this method of co-occurrence analysis is not tallying frequencies. So when the pair (q, u) has the highest co-occurrence ratio, it does not mean that is the most common pair. Since the character ‘q’ is rarely used in the English language, its single occurrences are low, while its joint occurrences with the character ‘u’ are high. This is why for all the tests above many of the pairs with the highest affinities are uncommon consonants paired with a much more common vowel, like ‘e’. In both texts where the timescale 10 analyses were used, the top 10 pairs, excluding (q, u), have the character ‘e’ as a second character.

The data collected through this analysis has numerous uses outside of literature. The co-occurrence information can be used to evaluate the querty keyboard layout, or be used in speech therapy. Both the texts used here are from the same era, the turn of the sixteenth century into the seventeenth. It is possible that the character co-occurrence ratios could change with modern text or transcribed speech. Other than analyzing text, this analysis can be applied to images or videos for facial or object recognition.

I hope that this interesting re-application of memory layout analysis was entertaining to read about and I’d like to thank Professor Chen Ding, Lucinda Liu, and Professor Daniel Gildea for working with me on this project.

Image Sources: https://upload.wikimedia.org/wikipedia/commons/f/f6/Sonnets1609titlepage.jpg

https://upload.wikimedia.org/wikipedia/commons/e/e8/King-James-Version-Bible-first-edition-title-page-1611.png