Abstract:

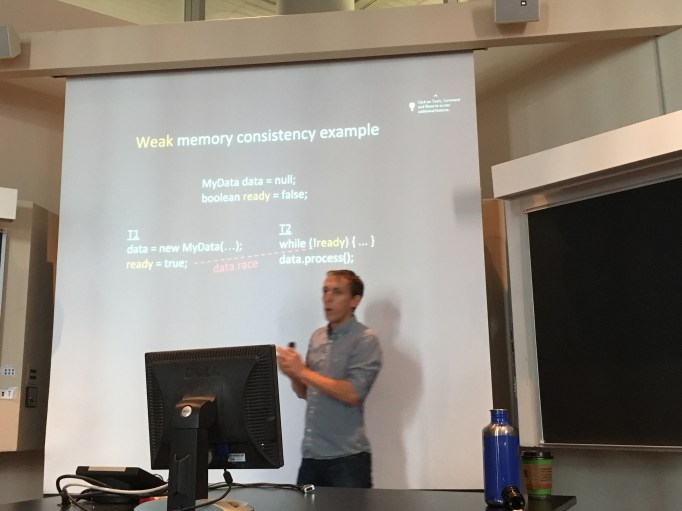

With the end of Dennard scaling, software must become more parallel to exploit microprocessors that offer more, instead of faster, execution contexts. General-purpose languages and systems provide the shared-memory abstraction, which is powerful and easy to understand, but achieving both correctness and scalability with it is notoriously hard. A fundamental problem is that shared-memory languages and systems provide weak or undefined behavior for all parallel programs that are not perfectly synchronized.

This talk motivates the need to put languages and systems on a solid foundation by providing strong end-to-end memory consistency models. I’ll describe our ongoing software- and architecture-based approaches for providing strong memory consistency. An important element of our solutions is that strong consistency enables us to rethink the design of other system features such as cache coherence. Overall, our work suggests that practical strong consistency is achievable and that it offers benefits that have not previously been realized.

Bio:

Michael Bond is an associate professor at Ohio State University. He completed his Ph.D. and a postdoc at UT Austin, advised by Kathryn McKinley. In collaboration with his Ph.D. students and others, Mike’s research addresses the challenges of achieving reliable and scalable parallel systems. His work has received an OOPSLA Distinguished Paper Award, an OOPSLA Distinguished Artifact Award, and an ACM SIGPLAN Outstanding Dissertation Award.