Cache is used in all general-purpose computing systems and the subject of numerous studies. This 40-page paper is the foundation as timeless as it is specific and comprehensive. It begins with the outlook that (1) computer systems will increase in size and speed, (2) the demand on storage systems will increase, and (3) the increasing demand for speed and capacity “cannot be fulfilled at an acceptable cost-performance level within any single technology”.

Consider the question that you were a member of a group at the crust of the new era. What questions will you answer that will have a lasting value? What’s distinct about the new problem? What’s common in most efforts when addressing the problem?

The real problem is the automatic management of cache memory of an arbitrary size. In contrast, CPU registers represent the problem of explicit control and fixed size.

The paper examines LRU, MRU, OPT, LFU, LTP (transition probability), and Random and finds a common property (inclusion)and a common algorithm (stack simulation) to measure the miss ratio for all cache sizes in a single pass. Any management method that observes the inclusion property is a stack algorithm and can be measured by stack simulation.

The cache size is captured by the stack distance. It is the key metric of memory hierarchy. It covers all cache levels and sizes. Miss ratio is monotone, no Belady anomaly.

Formalization of the inclusion property and stack simulation.

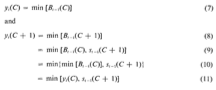

Below shows the notations.

yi(C) is the item to be evicted when the size C cache is full and a new item is accessed. Bt-1(C) is the content of size-C cache at time t-1. min[ ] is the least priority item.

A replacement algorithm is a stack algorithm if and only if y_t(C+1) = s_t-1(C+1) or y_t(C+1) = y_t(C)

OPT

Belady described the MIN algorithm for a given cache size. OPT describes a stack algorithm and a two-pass implementation. 3 pages in main body and 6 pages in appendix.

A comment on Feb 4th’s course on Meyer’s book. As it mentioned, the memory access speed has a hard limit, the speed of light. But does the memory technology has hard limit? More specifically, can we build infinitely small memory system with infinitely large capacity?

At any moment, there is a technology limit on the feature size, i.e. how small a transistor gate has to be. The limit is temporary and moving with time. But at the any given time, it is a constant. At this time, a hierarchy can expand the memory capacity beyond this temporary limit while providing the same fast access. What do you think?