
On Friday I received the Frederick D. and Susan Rice Lewis College Award for Undergraduate Teaching and Research Mentorship. It was delightful to see everyone, including many of my current and former students. I prepared and delivered the following speech:

Thanks to Fred and Susan Lewis for creating this award and for being here; to Dean Runner, Mike, Cesar, and Chris for your generous words; to Department Chair Dr. Dwarkadas for the nomination; and to Ms. Logory for organizing today’s event.

Thanks to the terrific computer science department and to our staff, especially Eileen Pullara for her tireless work. Countless times I have asked her, “Can I hire another undergrad?” It is a community of support. I’m standing on the shoulders of not just the department, but also the university community and the scientific community.

Let me first state my teaching philosophy by quoting the Chinese philosopher Confucius, who lived more than 2,000 years ago and was himself a teacher. He said, and I translate: Achieve independence by making others independent. Achieve prosperity by making others prosperous.

As university faculty, we are stewards of knowledge. My colleague Randal Nelson once compared education and business. He said: “The fundamental currency of a university is knowledge; while that of a corporation is capital. Both traffic information, money, and talented human beings, … but the bottom-line metric differs.” Money, resources, and capital have limits; competing for them is often called a zero-sum game. Knowledge knows no limits.

In teaching, I specialize in programming languages and systems. I show students a cartoon of an interview room at Ikea. The text in black instructs the nervous interviewee: “Make a chair and take a seat.” The text in red is for my class: “Invent a language and talk.”

Interesting problems abound: how do we describe a language when we do not yet have a language? Similar circular problems arose in Cesar’s research then and arise in Mike’s research on memory allocation now. The same is true of my Confucius motto: independence and prosperity are problems that cannot be solved in isolation.

In most courses I teach, students learn by doing. Alan Perlis, the first recipient of the Turing Award in 1966, said: “You think you know when you can learn, are more sure when you can write, even more when you can teach, but certain when you can program.” To understand a programming language, you must not just learn it but build it.

How do we create knowledge?

We discover it through science, first by measuring. We are in the dark and remain so until we measure. The United States’ response to the coronavirus is hampered by a president who communicates by tweets, by the politicization of the crisis response, and, most damaging, by anti-science arrogance and idiocy.

The first step it missed, and is still missing, is testing. Without testing there is no science, and there is no knowledge. This ignorance puts medical personnel, emergency responders, and their families in danger, and we are beginning to see disproportionate fatalities in poor city neighborhoods, among indigent populations, and among people with disabilities.

This Wednesday I was most proud to see the University News photo of our medical school colleagues returning from helping in NYC. In teaching, as in medicine, we work with knowledge and skill.

Like our medical colleagues, we work together. We collaborate.

The best teacher-student relationship is symbiotic. I confess that when I first taught computer organization, the evaluations weren’t good. A common criticism, believe it or not, was that the professor gave too many extensions. The next year, I set a schedule and followed it. Then the department machines had to be shut down for days while the building’s cooling water system was repaired. I held the line and told my students that we don’t need extensions for lack of cooling, not in the middle of winter, not in Rochester. I received high scores for that course.

At a research university like ours, teaching keeps close pace with technology.

This semester I am teaching new material on machine-checked proofs.

Knowledge is not just the truth but, more importantly, the proof. Proof is knowledge about knowledge: how do we know that we know?

This is unprecedented material that combines programming languages and the language of logic. (1) Programming encodes reasoning, and (2) reasoning verifies programming. (3) With computers, programming automates reasoning. (4) Even more exciting is a new way to collaborate: our reasoning can be combined just as our programs can. (5) Most exciting is where our students will take this knowledge. Three undergrads and their TA are “programming” a proof for a joint paper with my colleagues and may introduce machine-checked proofs to the database community.

We teach technology. We collaborate to develop technology. We also teach and develop technology to amplify collaborative work.

The University of Rochester, with its size (not too big and not too small) and its combination of research and liberal arts education, is one of the best places to collaborate.

The best collaboration is to inspire and be inspired. Let me take just one minute to give two examples.

Lane Hemaspaandra created the honors course I’m teaching now, and he continues to help me and to serve as my model in teaching collaborative problem solving.

Chris Tice, when he was a student, solved an extra-credit problem after the assignment was due. Why do extra work for no credit? He said that he hadn’t had time to work on it before the deadline, but he was interested, so he went back to it once he had time. When a student is not there just for the grade, a teacher is not there just for grading. It is what Hajim said at every commencement: if you love your work, you never have to work.

To summarize, I started with my motto of independence and prosperity and highlighted three ingredients of a happy life: to collaborate, to discover, and to inspire. To help you remember, the initials CDI are the first three letters of my university NetID.

I want to thank the people who inspired me the most: my longest-time colleagues, mentors, and supporters Michael Scott and Sandhya Dwarkadas; my graduate advisors, the late Dr. Ken Kennedy and Philip Sweany; my mother Ruizhe Liu, who is currently battling cancer, for her unyielding courage and support; my father Shengyao; my brother Rui; my dear wife and Rochester graduate Dr. Linlin Chen; and our two children, Yawen and Shuwen, who were both born in Rochester.

Last, let me share a few road trip photos. In 2016, we went to a conference in DC. We zoomed along at 90 miles an hour in Pennsylvania but then sat still in traffic the minute we reached Baltimore, though we had fun counting the many Rs. Once we arrived, the undergrads had homework. The book on the table was Michael’s popular textbook, in its fourth edition. At the award banquet, we were the Rochester 9. The following year we had a smaller group, and Google flew one of the undergrads out for an interview. But in this photo we were the largest group and were recognized as such.


I received an award for exactly that reason: the award for the largest group attending the conference. Inspired, I told my students: you see, 100% of success is just showing up.

Back then it was literally true for that award. Today I feel the same about this award. It is most humbling. Thank you all.

Besides the people I thanked above, I am also grateful to the many who joined the meeting and for the spontaneous comments after the event from my colleagues Michael Scott and Sandhya Dwarkadas and my former doctoral graduates Xiaoming Gu, Chengliang (Cheng) Zhang, Pengcheng Li, and Xipeng Shen. Dr. Cheng Zhang, attending from home in Seattle, said that through his work at Microsoft, Amazon, and now Google, he collaborates in teams and works to lift others.

It was great fun, heartwarming, and well organized. There weren’t even any problems with the network.


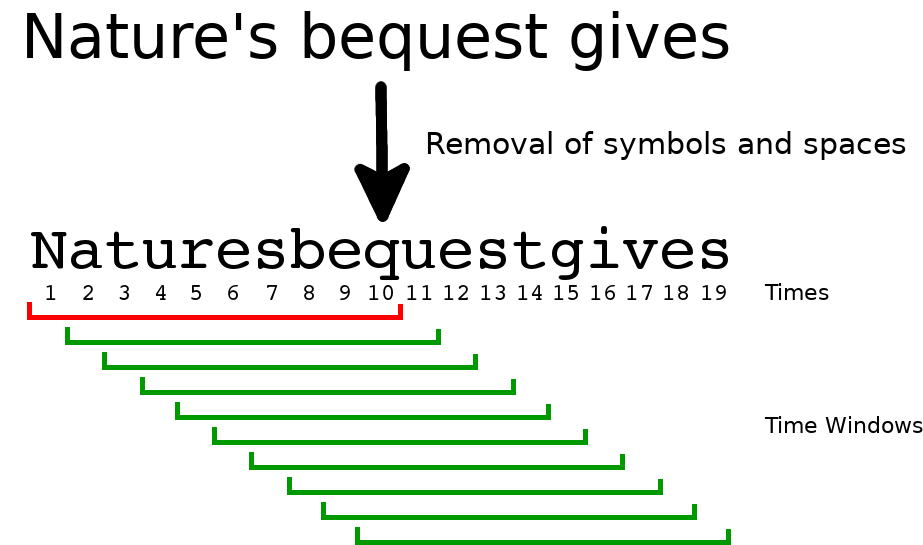

n,” the lower bound of which refers to windows with their end index before their start. This also leads to some mathematical inconsistencies in sections 3.2.1 and 3.2.2.

should instead read