CSC 255/455 Software Analysis and Improvement (Spring 2016)

- Course description

- Course schedule (2nd update, March 26)

- Instructor and grading

- Textbooks and other reading material

Lecture slides (when used), demonstration programs, and some of the reading material will be distributed through Blackboard. Assignments and projects will be listed here.

Assignments:

- def-use in URCC/LLVM

- LVN in URCC/LLVM

- CFG pass in URCC/LLVM

- Instruction counting in GCC/LLVM

- Local value numbering

- Trivia assignment. Read slashdot.org for any current or archived posts. Select any two posts on a topic of either GNU, GCC, or LLVM (both posts may be on the same topic). Read the posts and all discussions. Write a summary of 200 or more words for each of the two posts. In the summary, review the facts and the main opinions on which people agreed or disagreed. Print and submit a paper copy on Wednesday, January 20th, at the start of class. Then see me during one of my office hours for feedback on the summary. There is no deadline for the meeting, but the grade is assigned only after the meeting. If you see me before submitting the paper, bring your paper; otherwise, don't, since I'll have your paper.

Course description

With the increasing diversity and complexity of computers and their applications, the development of efficient, reliable software has become increasingly dependent on automatic support from compilers and other program analysis and translation tools. This course covers principal topics in understanding and transforming programs by the compiler and at run time. Specific techniques include data flow and dependence theories and analyses; type checking and program correctness, security, and verification; memory and cache management; static and dynamic program transformation; and performance analysis and modeling.

Course projects include the design and implementation of program analysis and improvement tools. Meets jointly with CSC 255, an undergraduate-level course whose requirements include a subset of the topics and a simpler version of the project.

Instructor and grading

Teaching staff: Chen Ding, Prof., CSB Rm 720, x51373; Rahman Lavaee, TA, CSB 630, x52569.

Lectures: Mondays and Wednesdays, 10:25am-11:40am, CSB 632

Office hours: Ding, Fridays 3:30pm-4:30pm (and Mondays at the same time if pre-arranged).

TA office hours: Wednesdays 3:30pm-4:30pm, CSB 720.

Grading (total 100%)

- midterm and final exams are 15% and 20% respectively

- the projects total 40% (LVN 5%, GCC/LLVM 5%, local opt 10%, global opt 10%, final phase 10%)

- written assignments are 25% (trivia 1%; 3 assignments 8% each)

Textbooks and other reading material

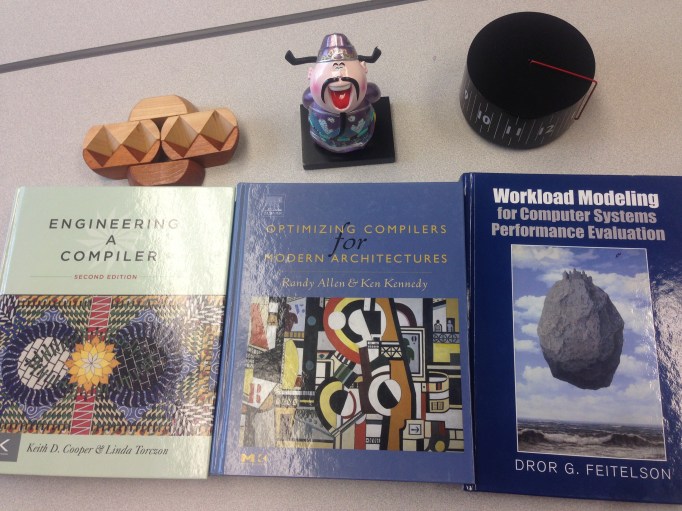

Optimizing Compilers for Modern Architectures (UR access through books24x7), Randy Allen and Ken Kennedy, Morgan Kaufmann Publishers, 2001. Chapters 1, 2, 3, 7, 8, 9, 10, 11. Lecture notes from Ken Kennedy. On-line errata.

Engineering a Compiler (2nd edition preferred, 1st okay), Keith D. Cooper and Linda Torczon, Morgan Kaufmann Publishers. Chapters 1, 8, 9, 10, 12, and 13 (both editions). Lecture notes and additional reading from Keith Cooper. On-line errata.